Over the last few days I did some migration work. For experimental purposes I configured my old MOSS 2007 demo machine to use ASP.NET SQL FBA, including MySites and profiles for the FBA users.

First I migrated the old Shared Service Provider config DB to serve as the profile DB of the new User Profile Service App.

1.

Then I migrated the content databases of a demo web app and the dedicated mysites web app.

After that I configured FBA for both web apps.

The next step was to migrate the “old” user accounts to claims accounts.

Look at the content databases. This is how the “UserInfo” table looks before migration:

At this point I needed to make sure that the web application was already set up to use FBA. The role provider and membership provider names *must* be the same as in 2007!

Then I executed

$webApp.MigrateUsers($true)

on both web apps. ($webApp is an object that I retrieved with the Get-SPWebApplication cmdlet.)
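As a minimal sketch of how that call could be run against both web apps in one go: the two URLs below are hypothetical placeholders, not the ones from my demo machine, and the snippet assumes it runs on a farm server with sufficient rights.

```powershell
# Load the SharePoint snap-in when not running inside the SharePoint Management Shell.
Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue

# Hypothetical URLs: replace them with your demo web app and your MySites web app.
$urls = @("http://demo.example.local", "http://mysites.example.local")

foreach ($url in $urls) {
    # Retrieve the web application object for this URL.
    $webApp = Get-SPWebApplication -Identity $url

    # Same call as shown above, executed once per web application.
    $webApp.MigrateUsers($true)
}
```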

After that, the content database's UserInfo table looks like this:

THERE IS A PROBLEM!!!! Look at this claim login for example:

According to Wictor's description of the claim structure:

… this is WRONG!!! “i:0#.f” indicates a user logon name, but “allfbausers” is an FBA role!

The “i:0#.f” prefix must be changed to “c:0-.f”, which decodes as follows:

| c: | 0 | - | . | f |
| --- | --- | --- | --- | --- |
| Other claim | reserved | is a role | data type is string | claim is from forms AuthN |

It must be migrated manually by using this PowerShell script:

If you skip this step, your “old” FBA roles will not work as expected! This was my big issue over the last few days, until I figured out that the migration translates roles to claims the same way as user identities, which is of course not correct.
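To make the translation itself concrete, here is a minimal sketch of the string rewrite only. It does not touch SharePoint or the content database. The function name and the role provider name “AspNetSqlRoles” are hypothetical, and the sketch assumes that the provider part of a role claim has to be the role provider's name in lower case, not the membership provider's.

```powershell
function Convert-FbaUserClaimToRoleClaim {
    param(
        [Parameter(Mandatory = $true)][string]$Login,
        # Assumption: name of the FBA role provider configured for the web application.
        [Parameter(Mandatory = $true)][string]$RoleProviderName
    )

    # A forms claim has three parts: prefix | provider | value.
    $parts = $Login -split '\|', 3

    # Leave anything untouched that is not a forms user-logon claim.
    if ($parts.Count -ne 3 -or $parts[0] -cne 'i:0#.f') {
        return $Login
    }

    # Swap the prefix to the role form and the provider to the role provider.
    return 'c:0-.f|' + $RoleProviderName.ToLowerInvariant() + '|' + $parts[2]
}

# Example with the wrongly migrated role from above:
Convert-FbaUserClaimToRoleClaim -Login 'i:0#.f|aspnetsqlmembers|allfbausers' -RoleProviderName 'AspNetSqlRoles'
# -> c:0-.f|aspnetsqlroles|allfbausers
```

Only entries that are really roles should be passed through such a function. Real user logins have to keep their “i:0#.f” prefix.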

After executing the script the content database looks like this:

2.

The next step is to migrate the profiles in the User Profile Service App…

Before migration, the UserProfile_Full table of the User Profile Service App's “Profile” database looks like this:

Then I executed the “MigrateFormsLegacyUsersToFormsClaims” method on the User Profile Service Application using PowerShell.

$upa = Get-SPServiceApplication | Where-Object { $_.Name -eq $upaName }

$upa.MigrateFormsLegacyUsersToFormsClaims()

$upa.Upgrade()

$upaName contains the name of the existing User Profile Service App.

If you get an error in the ULS log like this:

Error messages:

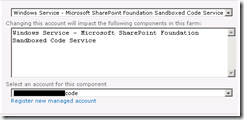

- Exception occured while connecting to WCF endpoint: System.ServiceModel.Security.SecurityAccessDeniedException: Access is denied.

- UserProfileApplicationProxy.InitializePropertyCache: Microsoft.Office.Server.UserProfiles.UserProfileException: System.ServiceModel.Security.SecurityAccessDeniedException

- Failure retrieving application ID for User Profile Application Proxy ‘User Profile Service Application Proxy’: System.NullReferenceException: Object reference not set to an instance of an object.

- Failure retrieving application ID for User Profile Application Proxy ‘User Profile Service Application Proxy’: System.NullReferenceException: Object reference not set to an instance of an object.

- MigrateFormsLegacyToFormsClaims.Migrate: User: AspnetSqlMembers:employee1, Failed to migrate to: i:0#.f|aspnetsqlmembers|employee1, Exception: System.ArgumentNullException: Value cannot be null. Parameter name: userProfileApplicationProxy

…you need to assign “Full Control” permissions on the User Profile Service App to the user who executes the script! Otherwise you will not be able to convert the users!
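The permission can be set in Central Administration, but a minimal PowerShell sketch could look like this. The service application name and the account are hypothetical. The sketch also assumes that the connection permissions of the service application are meant; for the administrators list, the -Admin switch would have to be added to both security cmdlets.

```powershell
# Load the SharePoint snap-in when not running inside the SharePoint Management Shell.
Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue

$upaName = "User Profile Service Application"   # hypothetical name
$account = "DEMO\spadmin"                       # hypothetical account that runs the migration

# Look up the User Profile Service App by name.
$upa = Get-SPServiceApplication | Where-Object { $_.Name -eq $upaName }

# Build a claims principal for the Windows account.
$principal = New-SPClaimsPrincipal -Identity $account -IdentityType WindowsSamAccountName

# Read the current permissions, add "Full Control" and write them back.
$security = Get-SPServiceApplicationSecurity -Identity $upa
Grant-SPObjectSecurity -Identity $security -Principal $principal -Rights "Full Control"
Set-SPServiceApplicationSecurity -Identity $upa -ObjectSecurity $security
```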

After executing the script above the database looks like this:

3.

I’ve assembled all scripts for this article in one PowerShell script. You can download it here:

http://gallery.technet.microsoft.com/PowerShell-script-to-25b971ba